Why Most Agencies Are Using AI to Get Faster at Being Generic

I've had the same conversation with at least a dozen agency founders in the past six months. The details change. The pattern doesn't.

One founder rolled out Claude across his entire 12-person team. Proposals that used to take five days now took one. Blog output tripled in a quarter. Another gave every developer a Cursor subscription and cut documentation time in half. A third rebuilt her content workflow around AI and published more in three months than in the previous two years.

Every one of them was excited about the speed. Every one of them told me some version of: "We're producing so much more now."

And every one of them, when I asked the follow-up question, went quiet.

The question was the same each time: "What are you saying in all that content that your competitors can't also say?"

I started pulling up their recent blog posts during calls. The titles were almost interchangeable. "How to Choose a Software Development Partner." "5 Signs Your Legacy System Needs Modernization." "The Benefits of Agile for Mid-Market Companies." Clean writing. Solid structure. Useful in a generic way. And identical in substance to what hundreds of other development agencies were publishing with the same tools.

The proposals were faster but not sharper. They still opened with "We're a full-service development agency that helps companies build scalable digital products." So did everyone else's.

Here's the part that frustrated me. These weren't generic agencies. One had spent six years building healthcare compliance systems. He'd navigated HIPAA audits, integrated with four EHR platforms, and solved edge cases around clinical workflow automation that most dev shops have never seen. Another had deep expertise in fintech payment infrastructure. A third had rebuilt legacy logistics platforms for three Fortune 500 companies.

None of that expertise was in the content. None of it was in the proposals. The AI had been given nothing specific to work with, so it produced nothing specific.

Every one of these founders had invested $10K to $20K annually in AI tooling. Competitive advantage generated across all of them: zero.

Their conclusion, almost word for word: "AI isn't really moving the needle for us."

My conclusion, every time: AI was working fine. Their agencies had nothing distinctive for it to amplify.

The Pattern Has a Name

I call it The Opinion Deficit: the gap between an agency's actual expertise and the opinions it has codified well enough for AI, or anything else, to amplify.

Opinions in a professional services context aren't hot takes or contrarian poses. They're earned insights that come from solving the same category of problem repeatedly. When you rebuild healthcare compliance systems seven times, you start to notice that the same five architectural mistakes appear in almost every legacy system. You develop a point of view about the order in which modules should be migrated. You learn that the compliance review bottleneck is organizational rather than technical, and you build a specific process to address it.

Those are opinions. They're specific. They're earned. And they're the thing that makes a prospect think "this agency understands my problem better than the alternatives."

Most agencies have these opinions locked inside the founder's head or scattered across project retrospectives nobody reads. They've never been named, structured, or written down in a way that anyone else, human or AI, could use.

AI doesn't fix that. AI needs something to work with. Give it your named framework for healthcare system migration and it produces content that sounds like a specialist wrote it. Give it nothing and it produces content that sounds like a generalist wrote it. Because that's what it's working from.

The Opinion Deficit is the reason agencies invest in AI and see no competitive return. The tools work. The inputs are empty.

Why Better Tools Won't Produce Better Opinions

The natural response to the Opinion Deficit is to assume you need more time with the tools. Run more prompts. Experiment with more workflows. Eventually the distinctive stuff will emerge.

It won't. And the reason is structural.

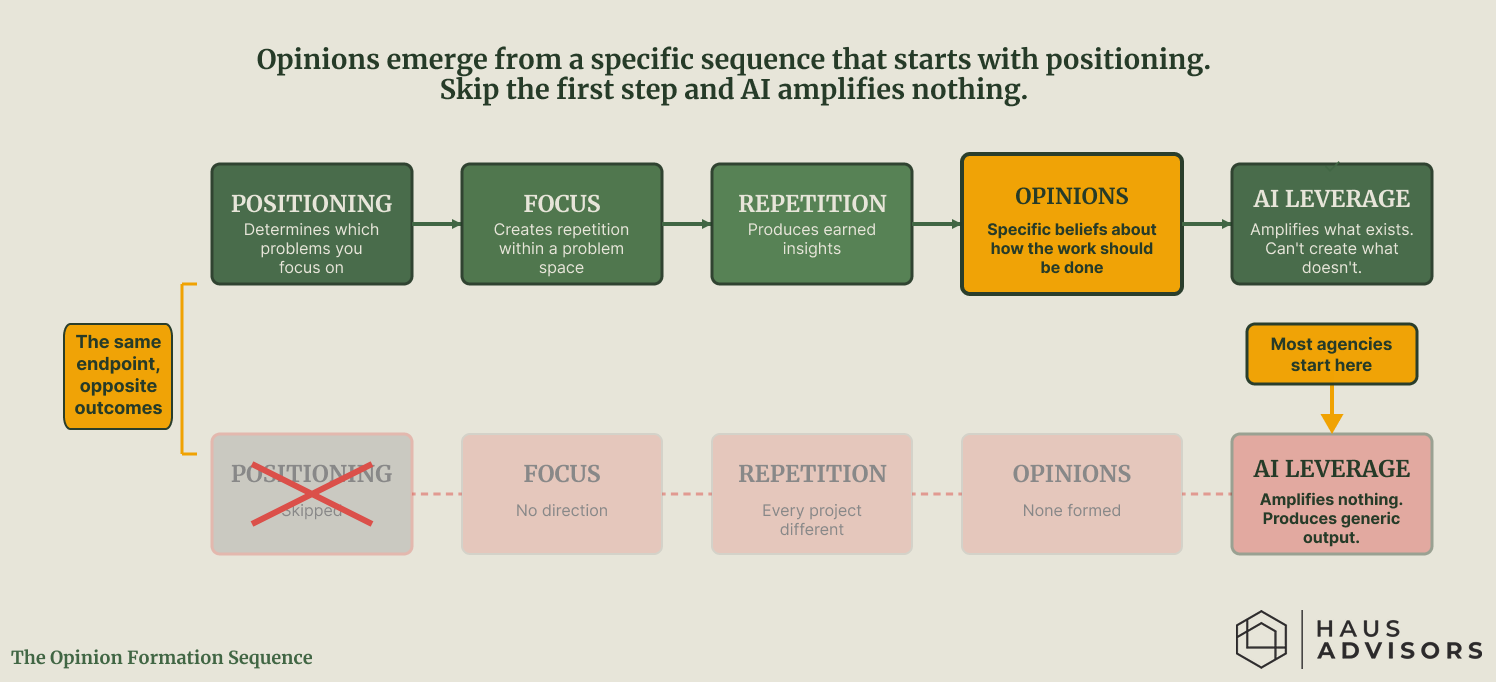

Opinions come from repetition. Repetition comes from focus. Focus comes from positioning. That sequence is causal, not optional.

Skip the first step and AI has nothing distinctive to amplify. Most agencies start at the end of this sequence and wonder why the tools aren't producing competitive advantage.

The healthcare agency I described has opinions because they've done the same category of work for six years. They've seen the patterns. They know which approaches fail and why. They've built shortcuts and frameworks that only exist because they've encountered the same problems dozens of times.

An agency that builds healthcare systems one quarter, e-commerce platforms the next, and internal tools the quarter after that never accumulates enough repetition in any single domain to develop those insights. They might be excellent developers. They might deliver good work. But they don't develop the kind of specific, earned perspective that separates a specialist from a generalist in the mind of a buyer.

The sequence works like this. Positioning determines which problems you focus on. Focus creates repetition within that problem space. Repetition produces earned insights you couldn't get any other way. Those insights become opinions: your specific beliefs about how the work should be done and why the common approach is wrong. Opinions get codified into named frameworks and repeatable processes. Those frameworks become the infrastructure that AI actually amplifies.

Skip the first step and the rest of the chain produces nothing. You end up with AI generating content and proposals and deliverables that are competent, generic, and indistinguishable from what every other agency with the same tools is producing.

What It Looks Like When the Foundation Exists

There's a performance marketing agency that spent over a decade building opinionated infrastructure. They didn't start with AI. They started with methodology.

They developed proprietary frameworks for forecasting, margin analysis, budget allocation, and creative demand planning. Each framework represents a specific opinion about how growth should work. Not borrowed best practices. Earned insights developed across billions of dollars in managed spend, refined across hundreds of clients, and encoded into software that any operator can use.

Their forecasting model predicts revenue within 3% of actual results on a daily basis. Their budget allocation framework has a name. Their approach to attribution has a name. Their model for creative production volume has a name. Every one of these is an opinion, written down and systematized.

Today, a single operator at that agency does the work that used to require four people. They call this person a "profit engineer." That term itself is an opinion. It says the person running your marketing should own a P&L, not a dashboard.

That operator is not unusually talented. They're a competent person sitting on top of deeply opinionated infrastructure. Unified data. Forecasting models. Named frameworks. Action tooling that automates the mechanical work so the human can focus on judgment.

The AI layer sits in the middle of their stack. Not at the bottom. Not at the top. On top of unified data, transformed models, and proven methodology. Below the human operator who makes the final calls.

Take away the opinions and the systems, and the AI produces generic output. The technology didn't create the leverage. The opinions did. The technology amplified them.

The Math That Makes This Concrete

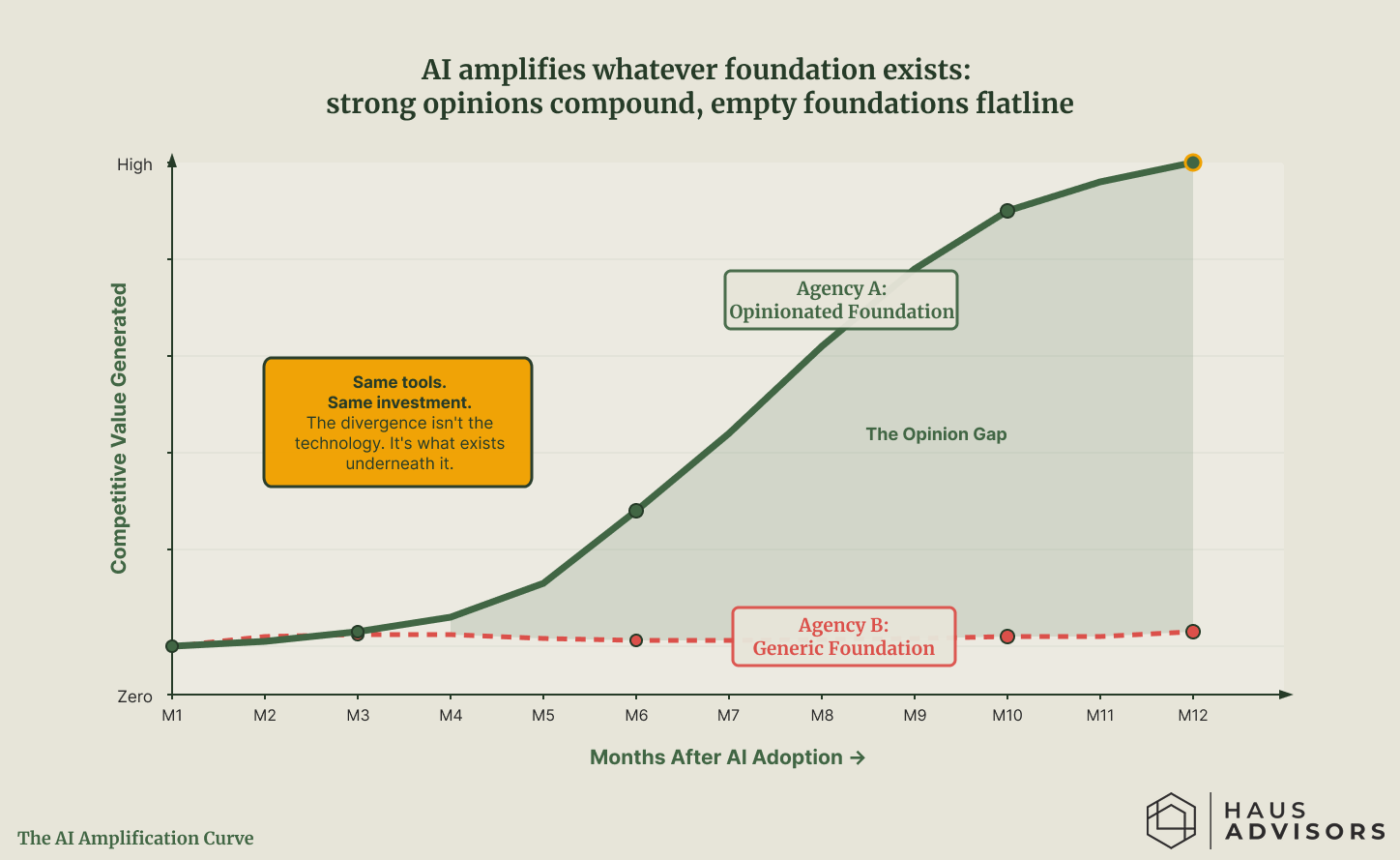

The divergence between opinionated and generic agencies isn't theoretical. It compounds in measurable ways.

Consider two development agencies. Both adopt AI in the same month. Both spend roughly the same on tooling. Both have competent teams.

Agency A spent four years focused on a single vertical. They've built named diagnostic frameworks, published 15 articles grounded in specific case studies, and developed a repeatable delivery process they can describe in detail to any prospect. When they integrate AI into their workflow, the AI produces proposals that reference their proprietary methodology, content that extends their existing body of expertise, and deliverables structured around their named processes.

Agency B is a generalist. Good work across a dozen industries. They adopt the same AI tools and start producing more output. The proposals are well written but generic. The content covers broad topics. The deliverables look professional but carry no distinctive methodology.


After 12 months:

Agency A has produced 50 pieces of content, each one reinforcing their specialist positioning. Their proposals convert at 35% because every proposal demonstrates domain expertise the prospect can't find elsewhere. Their average deal size has increased 20% because they're no longer competing on price. They're competing on insight.

Agency B has produced 80 pieces of content, more total volume, but none of it compounds. Each piece stands alone because there's no underlying framework connecting them. Their proposals convert at 15%, roughly the same as before AI. Their deal size hasn't changed because they're still being evaluated against five other generalist agencies on every opportunity.

Same tools. Same investment. Agency A generated roughly $400K in additional revenue attributable to their AI-enhanced positioning. Agency B generated the same revenue as the prior year, just with slightly lower production costs.

The tools didn't create the gap. The opinions underneath them did.
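The arithmetic behind that comparison can be sketched in a few lines of Python. The conversion rates (35% and 15%) and the 20% deal-size increase come from the comparison above. Everything else is an assumption made for illustration: 20 proposals a year, a $75K baseline deal, and Agency A starting from the same 15% conversion rate as Agency B. With those hypothetical inputs, the lift lands close to the $400K figure.

```python
def annual_revenue(proposals: int, conversion_pct: int, deal_size: int) -> int:
    """Revenue from won proposals, using whole-number percentages."""
    return proposals * conversion_pct * deal_size // 100


# Hypothetical inputs for illustration only, not figures from either agency.
PROPOSALS_PER_YEAR = 20
BASELINE_DEAL_SIZE = 75_000
BASELINE_CONVERSION_PCT = 15  # assumed pre-AI rate for both agencies

# Agency A: 35% conversion and a 20% larger average deal.
agency_a_deal_size = BASELINE_DEAL_SIZE * 120 // 100
agency_a_revenue = annual_revenue(PROPOSALS_PER_YEAR, 35, agency_a_deal_size)

# Agency B: conversion and deal size unchanged.
agency_b_revenue = annual_revenue(
    PROPOSALS_PER_YEAR, BASELINE_CONVERSION_PCT, BASELINE_DEAL_SIZE
)

# Both agencies are assumed to start from the same baseline year.
baseline_revenue = annual_revenue(
    PROPOSALS_PER_YEAR, BASELINE_CONVERSION_PCT, BASELINE_DEAL_SIZE
)

agency_a_lift = agency_a_revenue - baseline_revenue
agency_b_lift = agency_b_revenue - baseline_revenue

print(f"Agency A additional revenue: ${agency_a_lift:,}")
print(f"Agency B additional revenue: ${agency_b_lift:,}")
```

Swap in your own proposal volume and deal size. The point of the sketch is that conversion rate and deal size multiply, so a positioning gain on both shows up as a much larger revenue difference than either number suggests alone.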

What the Opinion Deficit Actually Costs You

Faster production of undifferentiated work. The agency produces more content, more proposals, more deliverables, and none of it moves the needle because it carries no signal. The founder mistakes output volume for competitive progress.

A widening gap against opinionated competitors. This is the cost most agencies don't see until it's too late. The competitors who did the positioning work first are using the same AI tools to amplify something distinctive. Every month, their content compounds their authority. Every month, your content adds to the noise. The gap isn't closing. It's widening faster.

AI investment that produces efficiency but not advantage. The agency saves time on production. Proposals go out faster. Blog posts get written in an hour instead of a day. But saving time on generic work isn't a competitive advantage. It's a cost reduction. And because every competitor with the same tools gets the same savings, cost reductions on commodity services compress margins rather than expand them.

A false sense of progress. This might be the most dangerous cost. The founder sees the volume of output, sees the time savings, and concludes that AI is working. By every activity metric, it is. By every outcome metric, nothing has changed. The activity creates the illusion of transformation without the substance of it.

Why Agencies Resist This Diagnosis

The strongest argument against what I'm describing: most agencies can't afford to spend four years building a positioning foundation before they see returns from AI. They need results now. The advice to "start with positioning" feels like being told to renovate the foundation when the roof is leaking.

That's a fair concern about speed.

Where That Logic Breaks Down

The assumption underneath that objection is that AI adoption without positioning produces results faster. It doesn't. It produces more output faster. Output and results are not the same thing.

Every founder I described at the top of this article adopted AI immediately. They moved fast. They produced more content in months than they had in the years before. And none of it moved the needle because the content carried no signal. The healthcare agency founder would have gotten more competitive value from spending one week writing down his specific approach to compliance system migration than from three months of AI-generated generic content.

The practical path isn't sequential. It's parallel. You don't stop using AI while you figure out positioning. You start the positioning work immediately and restructure your AI usage around whatever opinions you can articulate right now.

The One-Week Sprint

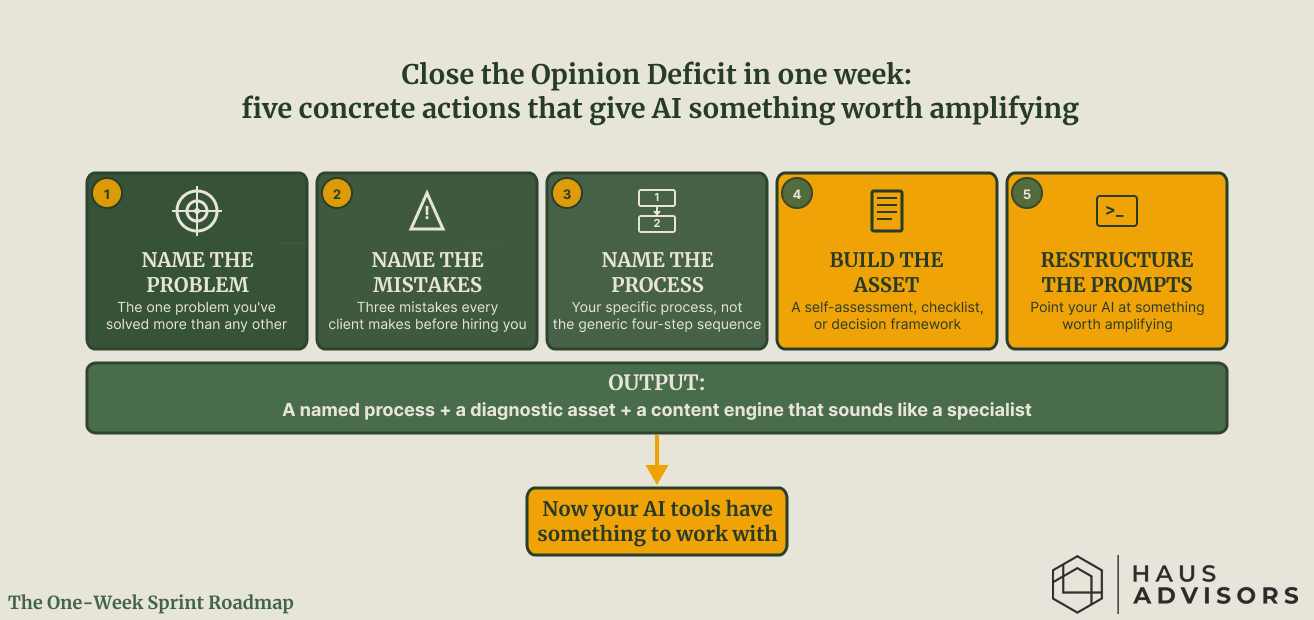

Here's what that looks like for the agency founder who needs to move this week.


Write down the one problem you've solved more times than any other. Not the broadest problem. The most specific one. If you've rebuilt legacy healthcare systems seven times, write that down. If you've migrated e-commerce platforms off Magento for mid-market retailers four times, write that down.

Name the three mistakes you see every client make before they hire you. These are your earned insights. You know them because you've seen the pattern. A founder who's rebuilt healthcare systems seven times can name the architectural mistakes in their sleep. Write them down in plain language.

Name your process. Not a generic "discovery, design, development, deployment" sequence. Your specific process for solving this specific problem. What do you do first that other agencies skip? Where do you spend more time than competitors because you've learned it matters? Give the process a name. It doesn't have to be clever. It has to be specific.

Build one diagnostic asset. Take those three common mistakes and turn them into a self-assessment, a readiness checklist, or a decision framework. This is the asset you'll offer in outbound emails, reference in proposals, and publish on your site. AI can help you produce this quickly, but only after you've done the thinking about what goes in it.

Restructure your AI prompts. Stop asking AI to "write a blog post about digital transformation." Start asking it to "write a blog post explaining why most healthcare system migrations fail at the data layer, based on our framework that identifies the five most common architectural mistakes in legacy patient portal systems." The output from the second prompt is unrecognizable compared to the first. Same tool. Different inputs.

That's a one-week sprint. Not a four-year foundation project. At the end of it, you have a named process, a diagnostic asset, and a content engine that sounds like a specialist instead of a generalist. It's not the finished product. It's the seed. But it's a seed that gives AI something worth amplifying.
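For teams that send prompts from scripts rather than a chat window, the restructuring step can be sketched as a small Python helper. This is a minimal illustration, not a prescribed implementation: the `Framework` class, the `build_prompt` function, the framework name, and the placeholder mistakes are all hypothetical. Replace them with the problem, mistakes, and process name you wrote down during the sprint.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Framework:
    """Hypothetical container for the opinions written down during the sprint."""
    name: str            # the name you gave your process
    problem: str         # the one problem you've solved most often
    mistakes: List[str]  # the mistakes clients make before they hire you


def build_prompt(topic: str, framework: Optional[Framework] = None) -> str:
    """Return a generic prompt, or a specific one when a framework is supplied."""
    if framework is None:
        # The kind of prompt that produces generic output.
        return f"Write a blog post about {topic}."
    mistakes = "\n".join(f"- {m}" for m in framework.mistakes)
    return (
        f"Write a blog post explaining {topic}.\n"
        f"Base it on our framework, '{framework.name}', "
        f"which addresses {framework.problem}.\n"
        f"Cover these mistakes we see repeatedly:\n{mistakes}"
    )


# Placeholder values: fill these in with your own earned insights.
framework = Framework(
    name="Legacy Portal Migration Review",  # hypothetical name
    problem="legacy patient portal migrations",
    mistakes=[
        "<mistake 1, in your own words>",
        "<mistake 2, in your own words>",
        "<mistake 3, in your own words>",
    ],
)

generic_prompt = build_prompt("digital transformation")
specific_prompt = build_prompt(
    "why most healthcare system migrations fail at the data layer", framework
)
print(specific_prompt)
```

The helper does nothing clever. Its value is that the framework lives in one place, so every prompt your team sends starts from the same written-down opinions instead of a blank page.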

The Next Step

The Opinion Deficit connects to every other growth channel an agency operates. The diagnostic framework you build for AI-enhanced content is the same asset you offer in precision outbound emails. The named methodology that structures your AI-generated proposals is the same methodology that makes your conference talks worth attending. The case studies your AI helps you produce are the same case studies that make referral conversations specific instead of generic.

Positioning determines what opinions you develop. Productization is where opinions get encoded into repeatable systems. Publishing is how those opinions reach the market. AI accelerates all three, but only when the underlying expertise exists.

Start with the one-week sprint. Name the problem. Name the mistakes. Name the process. Build the asset. Then point your AI tools at something worth amplifying and watch the output change.

The principle is this:

There are agencies using AI to amplify their opinions, and there are agencies using AI to produce more of nothing. The first group started with positioning. The second group started with tools and hoped the opinions would follow. They won't. They never do.

At Haus Advisors, we help dev shops and technical agencies build the positioning foundation that makes AI investment actually compound. The Opinion Deficit isn't fixed by better prompts or more subscriptions. It's fixed by doing the work that gives you opinions worth amplifying. If your AI tools are producing volume but not advantage, I'll record a free Loom teardown showing you exactly where the gap is and what to build first. Book a strategy call here →